If you work with AI APIs — wrapping calls to Claude, GPT-4, Gemini, or anything else — there’s a good chance you’ve used LiteLLM. It’s the Python package that gives you one unified interface for all of them. About 97 million downloads a month. Basically a standard part of the modern AI development stack.

Last week, someone poisoned it.

Two versions — 1.82.7 and 1.82.8 — were published to PyPI with a backdoor that activated the moment you installed them. Not when you ran your app. Not when you imported the library. Just installing it was enough.

Here’s what happened, what got taken, and exactly what you need to check.

The Story in Plain English

LiteLLM is maintained by a small team at BerriAI. Like most open source projects, they publish releases to PyPI using a publishing token stored in their CI/CD pipeline.

A group called TeamPCP found a way in through Trivy — an open source security scanner widely used in CI pipelines. They compromised Trivy to steal LiteLLM’s PyPI publishing credentials. With those credentials in hand, they built and uploaded two backdoored versions of the package directly to PyPI, signed with the real keys.

The packages looked completely legitimate. Hashes matched. Signatures verified. PyPI showed them as authentic. The package hadn’t been tampered with after the fact — it was malicious from the moment it was uploaded.

Within roughly three hours, an estimated 500,000 machines had installed one of the poisoned versions.

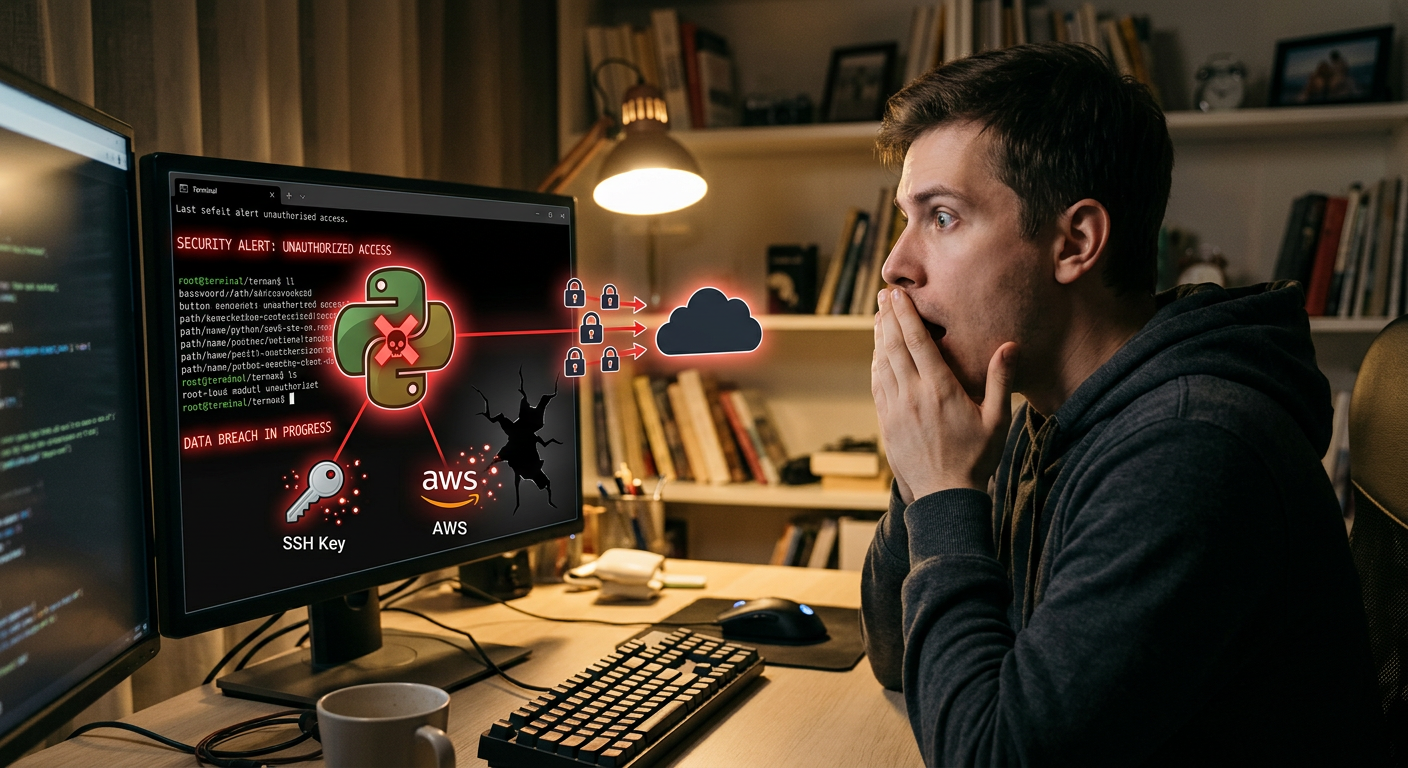

What Got Stolen

The malware went hunting for anything valuable on the system. The target list is brutal:

- SSH private keys: everything in ~/.ssh/

- AWS credentials: ~/.aws/credentials, environment variables

- GCP and Azure keys: service account files, tokens

- API keys: everything in .env files, anywhere on the filesystem

- Kubernetes configs: kubeconfig files with cluster access

- Crypto wallet seed phrases, if you had a software wallet

- Shell history: commands you’ve run, which often contain credentials typed inline

- Docker credentials: ~/.docker/config.json

- Database passwords: connection strings, config files

- SSL private keys: TLS certs for self-hosted services

Everything was encrypted with AES-256 and RSA-4096 and exfiltrated to models.litellm[.]cloud — a lookalike domain designed to blend into network logs.

How a Fork Bomb Exposed the Attack

The backdoor was discovered by a developer named Callum McMahon, and honestly — he got lucky in the worst way.

Callum was using Cursor (an AI code editor) with an MCP plugin. That plugin had LiteLLM as a transitive dependency — a dependency of a dependency. He never installed LiteLLM directly. He didn’t even know it was on his machine.

His computer started running out of RAM and crashed.

He traced the cause to a 34KB file called litellm_init.pth that the backdoored package had planted in his Python environment.

Here’s why that file is particularly nasty: Python’s site module processes every .pth file in site-packages at interpreter startup, and any line in one that begins with import is executed as code. That means every time you run python, every time you run pip, every time anything starts a Python interpreter in that environment, that .pth file runs. The malware used this to spawn a subprocess. That subprocess was itself a Python process, so it triggered the .pth file again. Which spawned another subprocess. Which triggered it again.

Fork bomb. The attacker’s buggy code recursively spawned processes until the machine ran out of RAM — and that crash is what exposed the attack. A cleaner implementation would have been nearly invisible.

In this case, sloppy code saved thousands of developers.
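To make the .pth mechanism concrete, here is a minimal, harmless sketch. It writes a throwaway .pth file into a temporary directory and asks the standard library’s site module to process that directory, which is the same handling site-packages gets at startup. The marker name PTH_DEMO_RAN and the file name demo.pth are made up for this demonstration; this is not the attacker’s code.

```python
import os
import site
import sys
import tempfile

# Hypothetical marker name, used only for this demonstration.
MARKER = "PTH_DEMO_RAN"


def demo_pth_execution() -> bool:
    """Write a harmless .pth file and let the site module process it."""
    os.environ.pop(MARKER, None)
    with tempfile.TemporaryDirectory() as tmp:
        pth_path = os.path.join(tmp, "demo.pth")
        with open(pth_path, "w", encoding="utf-8") as fh:
            # A line starting with "import" is executed, not treated as a path.
            fh.write(f"import os; os.environ['{MARKER}'] = '1'\n")
        # addsitedir() scans the directory for .pth files and processes them.
        site.addsitedir(tmp)
        # Undo the sys.path entry that addsitedir() added for the temp dir.
        if tmp in sys.path:
            sys.path.remove(tmp)
    return os.environ.get(MARKER) == "1"


if __name__ == "__main__":
    print("code inside the .pth file ran:", demo_pth_execution())
```

Nothing in the demo imports a package from that directory. Processing the .pth file is enough to run the line, which is why installing the poisoned release was sufficient.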

Why pip Didn’t Protect You

This is the part that’s hard to sit with: if you ran pip install litellm and got version 1.82.7 or 1.82.8, you did nothing wrong. There was no warning. No integrity check failed.

Hash verification only confirms that what you downloaded matches what PyPI is advertising. It says nothing about whether that content is malicious. The malicious packages were published using real, legitimate credentials. PyPI served them faithfully. Pip verified them successfully.
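A tiny sketch of why the hash check passes. The bytes below are a stand-in for a malicious upload, and the function name is made up for illustration; this is not pip’s internal code. The point is only that the advertised hash is computed from whatever was uploaded, so it matches by construction.

```python
import hashlib


def matches_advertised_hash(payload: bytes, advertised_sha256: str) -> bool:
    """Return True when the downloaded bytes match the advertised digest."""
    return hashlib.sha256(payload).hexdigest() == advertised_sha256


# Stand-in for a backdoored upload (illustrative bytes only).
malicious_upload = b"print('pretend this is a backdoored wheel')"

# The index advertises the digest of whatever the publisher uploaded.
advertised = hashlib.sha256(malicious_upload).hexdigest()

if __name__ == "__main__":
    # The check succeeds: the download is intact, but intact is not safe.
    print("hash verified:", matches_advertised_hash(malicious_upload, advertised))
```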

Andrej Karpathy put it plainly after the story broke:

“Every time you install any package you’re trusting every single dependency in its tree. And any one of them could be poisoned.”

He called it “software horror.” That’s not hyperbole. It’s an accurate description of what modern package ecosystems ask us to accept as normal.

The Hidden Risk: Transitive Dependencies

Here’s the part most people are missing.

You might not have installed LiteLLM at all — but if you used any of these packages, you might have gotten it anyway:

- dspy — the Stanford ML framework

- crewai — multi-agent orchestration

- mlflow — ML experiment tracking

- And dozens of other AI/ML tools

Any package that depended on litellm >= 1.64.0 could have pulled in the poisoned version. Package managers resolve to the latest compatible version by default. If that was 1.82.7 or 1.82.8, you got it.

This is why “I didn’t install that” doesn’t mean you’re safe.
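The resolution behavior can be sketched in a few lines. This is a deliberately simplified model, assuming plain dotted numeric versions and a hypothetical snapshot of available releases; real resolvers handle pre-releases, markers, and conflicts between packages.

```python
from typing import List, Optional, Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '1.82.7' into (1, 82, 7). Assumes purely numeric parts."""
    return tuple(int(part) for part in text.split("."))


def resolve_latest(available: List[str], minimum: str,
                   maximum: Optional[str] = None) -> Optional[str]:
    """Pick the newest version within [minimum, maximum], like '>=' does."""
    floor = parse_version(minimum)
    ceiling = parse_version(maximum) if maximum else None
    candidates = [
        v for v in available
        if parse_version(v) >= floor
        and (ceiling is None or parse_version(v) <= ceiling)
    ]
    return max(candidates, key=parse_version, default=None)


# Hypothetical snapshot of the index during the attack window.
RELEASES = ["1.64.0", "1.82.6", "1.82.7", "1.82.8"]

if __name__ == "__main__":
    print(resolve_latest(RELEASES, "1.64.0"))            # open-ended floor
    print(resolve_latest(RELEASES, "1.64.0", "1.82.6"))  # with an upper bound
```

With only a lower bound, the newest release wins, poisoned or not. An upper bound or an exact pin is what keeps the resolver away from it.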

Step-by-Step: Check If You’re Affected

Run these checks on any machine that’s touched Python in the last couple weeks.

# Step 1: Check your litellm version

pip show litellm | grep Version

# If it shows 1.82.7 or 1.82.8 — you were affected

# Step 2: Find the malicious .pth file

find / -name "litellm_init.pth" 2>/dev/null

# If this returns any result, the backdoor was installed

# Step 3: Check for the backdoor persistence file

ls ~/.config/sysmon/sysmon.py 2>/dev/null && echo "BACKDOOR FOUND"

# Step 4: Check for systemd persistence

systemctl --user status sysmon.service 2>/dev/null

# An active sysmon.service you didn't create is a bad sign

# Step 5: Check network logs for exfiltration attempts

grep "litellm.cloud\|checkmarx.zone" /var/log/syslog 2>/dev/null

If any of those checks flag something, assume the worst and move to the rotation checklist below.
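If you would rather run the first three checks from Python, here is a rough equivalent. It only inspects the interpreter and environment it runs in, so repeat it inside each virtual environment. The function names are invented for this sketch; the file names and versions are the ones listed above.

```python
import importlib.metadata
import os
import site
from typing import Iterable, List, Optional

BAD_VERSIONS = {"1.82.7", "1.82.8"}  # the two versions named in this article


def installed_litellm_version() -> Optional[str]:
    """Return the litellm version visible to this interpreter, or None."""
    try:
        return importlib.metadata.version("litellm")
    except importlib.metadata.PackageNotFoundError:
        return None


def find_indicator_files(site_dirs: Iterable[str], home: str) -> List[str]:
    """Look for the .pth file and the persistence script described above."""
    hits = []
    for directory in site_dirs:
        candidate = os.path.join(directory, "litellm_init.pth")
        if os.path.isfile(candidate):
            hits.append(candidate)
    persistence = os.path.join(home, ".config", "sysmon", "sysmon.py")
    if os.path.isfile(persistence):
        hits.append(persistence)
    return hits


def current_site_dirs() -> List[str]:
    """Site-packages directories for the running interpreter."""
    dirs = list(getattr(site, "getsitepackages", lambda: [])())
    dirs.append(site.getusersitepackages())
    return dirs


if __name__ == "__main__":
    version = installed_litellm_version()
    print("litellm version:", version or "not installed")
    if version in BAD_VERSIONS:
        print("WARNING: this is one of the backdoored versions")
    for hit in find_indicator_files(current_site_dirs(), os.path.expanduser("~")):
        print("indicator file found:", hit)
```

A clean result here does not clear other environments or containers on the same machine, so the find command in Step 2 is still worth running.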

If You’re Affected: The Credential Rotation Checklist

This is not optional if you were running 1.82.7 or 1.82.8. The malware had minutes to hours to run. Assume your credentials were taken.

SSH Keys

- Generate new key pairs: ssh-keygen -t ed25519

- Add new public keys to GitHub, GitLab, anywhere you use SSH

- Revoke and delete old public keys from all services

AWS

- Log into the IAM console

- Rotate all access keys (create new, update your apps, delete old)

- Check CloudTrail for any suspicious API calls in the last 7 days

GCP

- Rotate all service account keys in the Cloud Console

- Audit recent activity in Cloud Audit Logs

Azure

- Rotate app registration secrets and managed identity credentials

API Keys

- Go through every .env file you have

- Rotate every key: OpenAI, Anthropic, Stripe, Twilio, SendGrid, anything

- Most providers have “rotate” or “regenerate” in their dashboard settings

Docker

- Run docker logout and regenerate credentials in Docker Hub or your registry

Database Passwords

- Change passwords for any database the machine had access to

- Update connection strings in your apps

Kubernetes

- If any kubeconfig was on the machine, rotate service account tokens

- Audit recent kubectl activity in your cluster

Crypto Wallets

- If any software wallet or seed phrase was on the machine: treat it as compromised

- Transfer funds to a new wallet with a seed phrase freshly generated on a clean machine

How to Verify Clean Versions

If you need to verify you have a clean copy of the package, these are the known-bad file hashes:

- litellm_init.pth (from 1.82.8): 71e35aef03099cd1f2d6446734273025a163597de93912df321ef118bf135238

- proxy_server.py (from 1.82.7): a0d229be8efcb2f9135e2ad55ba275b76ddcfeb55fa4370e0a522a5bdee0120b
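To compare a file on disk against those two hashes, something like the following works. The helper names are made up for this sketch; the digests are copied from the list above.

```python
import hashlib
from typing import Optional

# SHA-256 digests of the known-bad files listed in this article.
KNOWN_BAD_SHA256 = {
    "71e35aef03099cd1f2d6446734273025a163597de93912df321ef118bf135238":
        "litellm_init.pth (from 1.82.8)",
    "a0d229be8efcb2f9135e2ad55ba275b76ddcfeb55fa4370e0a522a5bdee0120b":
        "proxy_server.py (from 1.82.7)",
}


def sha256_of_file(path: str, chunk_size: int = 65536) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def known_bad_label(path: str) -> Optional[str]:
    """Return a description if the file matches a known-bad hash, else None."""
    return KNOWN_BAD_SHA256.get(sha256_of_file(path))
```

Usage: call known_bad_label() on your installed proxy_server.py or on any litellm_init.pth you find. A None result means only that the file is not one of these two exact files, not that it is safe.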

Version 1.82.9 was published as a clean patch. To pin to safe versions in your requirements:

litellm<=1.82.6

Or if you need the latest:

pip install "litellm>=1.82.9"

The Takeaway for Your Future Setup

This attack worked because the PyPI ecosystem operates mostly on trust. There’s no mandatory code review. No quarantine period for new releases. No automatic malware scanning that would have caught this before it was served to millions of machines.

That’s not a criticism of PyPI — it’s an inherent challenge of maintaining a package registry at this scale. But it means the responsibility falls on us.

A few habits worth adopting:

Pin your dependencies. Use pip freeze > requirements.txt or lock files. Unpinned dependencies resolve to the newest version that satisfies their constraints, which is exactly what attackers count on.
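A quick way to spot unpinned entries in a requirements file is sketched below. It is a rough check with a made-up function name: it treats only name==version lines as pinned, skips pip option lines, and does not understand every requirements syntax (URLs and environment markers, for example).

```python
import re
from typing import Iterable, List

# A name, optional extras in brackets, then an exact '==' version.
PINNED = re.compile(r"^[A-Za-z0-9._-]+(\[[^\]]*\])?\s*==\s*[^\s;]+")


def unpinned_requirements(lines: Iterable[str]) -> List[str]:
    """Return requirement lines that are not pinned to an exact version."""
    flagged = []
    for raw in lines:
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith("-"):
            continue  # skip blanks, comments, and options such as -r or --hash
        if not PINNED.match(line):
            flagged.append(line)
    return flagged


if __name__ == "__main__":
    sample = ["litellm>=1.64.0", "requests==2.31.0", "# tooling", "dspy"]
    for entry in unpinned_requirements(sample):
        print("not pinned:", entry)
```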

Audit your transitive deps. Run pipdeptree to see what’s actually installed and which package pulled it in, and pip-audit to check those packages against known vulnerabilities. You’ll probably find packages you didn’t know were there.
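The standard library can answer the “why is this installed” question for a single package. This sketch lists installed distributions that declare a direct dependency on a given name, using importlib.metadata. The helper names are invented here, and the name parsing is a simplification of the full requirement grammar.

```python
import importlib.metadata
import re
from typing import List, Tuple


def normalize(name: str) -> str:
    """Normalize a project name so 'Foo_Bar' and 'foo-bar' compare equal."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_name(requirement: str) -> str:
    """Extract the project name from a string like 'litellm>=1.64.0; ...'."""
    match = re.match(r"[A-Za-z0-9._-]+", requirement.strip())
    return normalize(match.group(0)) if match else ""


def direct_dependents(target: str) -> List[Tuple[str, str]]:
    """Return (package, requirement) pairs for packages that require target."""
    wanted = normalize(target)
    found = []
    for dist in importlib.metadata.distributions():
        meta = dist.metadata
        name = (meta["Name"] if meta is not None else None) or "<unknown>"
        for req in dist.requires or []:
            if requirement_name(req) == wanted:
                found.append((name, req))
    return sorted(found)


if __name__ == "__main__":
    for package, requirement in direct_dependents("litellm"):
        print(f"{package} requires {requirement}")
```

This shows only one level of the tree. If a listed package is itself something you never installed, run the same function on its name to walk further up.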

Watch for .pth files. Check python -c "import site; print(site.getsitepackages())" to find your site-packages directory, then look for unexpected .pth files. Legitimate ones exist but they should be recognizable.
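Here is one way to automate that look, as a sketch with a made-up function name. It lists .pth files in the current interpreter’s site-packages directories and reports only the lines that begin with import, since those are the lines Python executes at startup.

```python
import os
import site
from typing import Dict, List


def executable_pth_lines(directory: str) -> Dict[str, List[str]]:
    """Map each .pth file in directory to its executable 'import' lines."""
    findings: Dict[str, List[str]] = {}
    try:
        names = sorted(os.listdir(directory))
    except OSError:
        return findings  # directory missing or unreadable
    for name in names:
        if not name.endswith(".pth"):
            continue
        path = os.path.join(directory, name)
        try:
            with open(path, encoding="utf-8", errors="replace") as fh:
                lines = [line.rstrip("\n") for line in fh
                         if line.startswith(("import ", "import\t"))]
        except OSError:
            continue
        if lines:
            findings[path] = lines
    return findings


if __name__ == "__main__":
    dirs = list(getattr(site, "getsitepackages", lambda: [])())
    dirs.append(site.getusersitepackages())
    for directory in dirs:
        for path, lines in executable_pth_lines(directory).items():
            print(path)
            for line in lines:
                print("   ", line[:120])
```

Expect a few legitimate hits, such as setuptools’ distutils-precedence.pth or editable installs. What deserves a closer look is a file you cannot trace to a package you know, especially a large one.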

Use virtual environments religiously. Compromised dependencies are more contained if your Python environments are isolated per project.

Minimize your footprint. Every package you add is a new trust relationship. Some are worth it. Others are convenience. Karpathy’s point stands.

The LiteLLM supply chain attack is a reminder that modern software development rests on an enormous pile of implicit trust. Most of the time that’s fine. Sometimes it isn’t.

Run the checks. Rotate the keys. Pin the deps.

Sources: FutureSearch analysis of the LiteLLM attack · GitHub issue #24512 · Snyk: Poisoned security scanner backdooring LiteLLM · FutureSearch: No prompt injection required