Your company just deployed five AI agents. One handles customer support tickets. One triages security alerts. One manages infrastructure scaling. One processes invoices. One writes and deploys code.

They all use the same service account.

If that sentence didn’t make you flinch, you need to read this article. If it did — congratulations, you understand the problem. Now let’s talk about why 43% of companies are doing exactly this, and why it’s one of the most dangerous identity anti-patterns in modern enterprise security.

The Stat That Should Keep CISOs Awake

According to recent industry surveys on AI agent deployment practices, 43% of organizations use shared service accounts for their AI agents. Not per-agent credentials. Not ephemeral tokens. Not scoped service principals. A single, fat, long-lived service account that multiple autonomous AI systems share like a family Netflix password.

This isn’t some obscure misconfiguration buried in a startup’s AWS account. This is happening at Fortune 500 companies, government agencies, and critical infrastructure operators. The AI agent gold rush has outpaced identity governance so completely that nearly half of enterprises are running autonomous systems with the identity equivalent of a Post-it note on a monitor.

Let’s unpack why this is fundamentally, catastrophically broken.

Why Shared Service Accounts Were Already Bad (Before AI Made Them Worse)

Shared service accounts have been a security anti-pattern for decades. Every auditor who’s ever looked at an Active Directory environment has flagged them. Most compliance frameworks discourage them. And yet they persist, because they’re easy.

The classic problems are well-documented:

- No audit trail attribution: When five services share svc-platform-prod, your SIEM logs show activity from that account, but you can’t tell which system took the action. Was it the backup job or the data pipeline? Was it scheduled maintenance or a lateral movement attempt? You don’t know.

- Blast radius amplification: Compromise one system that uses the shared account and you’ve effectively compromised all of them. The attacker inherits every permission granted to every service that shares those credentials.

- Privilege creep on steroids: Each service needs slightly different permissions. Over time, the shared account accumulates the union of all of them. Nobody removes permissions because nobody knows which service needs what. The account becomes a god account by accretion.

- Credential rotation nightmares: To rotate the password or key, you need to coordinate across every system using it simultaneously. In practice, this means the credentials never get rotated. Ever.
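
To make the privilege-creep point concrete, here is a minimal Python sketch. The service names and permission strings are invented for illustration; the only claim is that a shared account ends up holding the union of what every service needs, so each service inherits permissions it never uses.

```python
# Hypothetical per-service permission needs (names are illustrative only).
NEEDS = {
    "backup-job": {"storage:read", "storage:write"},
    "data-pipeline": {"db:read", "storage:write"},
    "report-builder": {"db:read", "mail:send"},
}


def shared_account_permissions(needs: dict[str, set[str]]) -> set[str]:
    """A shared account must hold the union of every service's needs."""
    return set().union(*needs.values())


def excess_permissions(needs: dict[str, set[str]]) -> dict[str, set[str]]:
    """Permissions each service inherits from the shared account but never needs."""
    shared = shared_account_permissions(needs)
    return {name: shared - required for name, required in needs.items()}


if __name__ == "__main__":
    for name, extra in excess_permissions(NEEDS).items():
        print(f"{name}: {len(extra)} unneeded permissions -> {sorted(extra)}")
```

In this toy inventory every service needs two permissions but holds four. Add a sixth or seventh service and the gap only widens.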

These problems are bad enough when the “services” in question are cron jobs and batch processors — deterministic systems that do the same thing every time they run. But AI agents aren’t cron jobs. They’re something fundamentally different.

AI Agents Are Not Traditional Service Accounts (Stop Treating Them Like They Are)

Here’s where the industry is making a critical conceptual error. Teams are deploying AI agents and plugging them into existing service account infrastructure as if they’re just another microservice. They’re not. AI agents differ from traditional automated services in ways that make shared identity models considerably more dangerous.

They Act Autonomously and Non-Deterministically

A traditional service account runs a script. The script does the same thing every time. You can predict its behavior, scope its permissions precisely, and audit its actions against a known baseline.

An AI agent decides what to do. Given the same input, it might take different actions depending on context, prior interactions, model updates, or even stochastic variation in inference. You can’t predict its behavior with certainty, which means you can’t pre-scope its permissions with precision. This is exactly when you need more identity granularity, not less.

They Chain Actions Across Systems

Traditional service accounts typically interact with one system, or a small set of systems in a predictable pattern. AI agents chain actions. A security triage agent might read an alert from your SIEM, query your CMDB for asset context, check your vulnerability scanner for related findings, look up the asset owner in your directory, draft a notification, and create a ticket — all in a single autonomous workflow.

When that entire chain runs under a shared service account, every system in the chain sees the same identity. There’s no way to distinguish the security agent’s CMDB query from the invoice agent’s CMDB query. Your access logs become meaningless noise.

They Learn and Evolve

AI agents get updated. Their models change. Their system prompts get modified. Their tool access gets expanded. A shared service account that was “good enough” three months ago might now be powering an agent with completely different capabilities and risk profile. But the identity layer doesn’t know that. It still sees svc-ai-agents hitting the same APIs.

They’re a Prime Target for Prompt Injection and Manipulation

AI agents are uniquely vulnerable to input-based attacks. Prompt injection, indirect prompt injection via data sources, and adversarial inputs can cause an agent to take actions its operators never intended. When that agent is operating under a shared service account with aggregated privileges, the blast radius of a successful prompt injection extends across every permission that account holds.

Imagine an attacker who manages to inject a prompt into a customer-facing support agent. If that agent shares credentials with an infrastructure management agent, the attacker might be able to pivot from “tell me my account balance” to “modify this production configuration” — all under the same identity, with no anomaly detection flagging the lateral movement because it’s all the same account.

Real-World Attack Scenarios: What Happens When It Goes Wrong

Let’s walk through three realistic scenarios that illustrate why shared AI agent service accounts are a ticking time bomb.

Scenario 1: The Compromised Support Agent

Setup: A company runs a customer-facing AI support agent and an internal HR data processing agent. Both use svc-ai-platform, which has read access to the customer database, the HR system, and the ticketing platform.

Attack: An attacker crafts a prompt injection through a customer support ticket. The support agent, following the injected instructions, begins querying the HR system for employee salary data and PII. Because the shared service account has legitimate access to both systems, no access controls fire. The SIEM sees svc-ai-platform querying HR — which it does every day when the HR agent runs. Nothing looks anomalous.

Impact: Mass data exfiltration of employee records through a customer-facing channel. No audit trail distinguishing the legitimate HR agent’s access from the compromised support agent’s access.
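
A rough Python sketch of why no access control fires in this scenario. The account name svc-ai-platform comes from the scenario; the per-agent identity names, resource labels, and the `is_allowed` helper are hypothetical stand-ins for whatever your authorization layer actually does.

```python
# Grants keyed by identity. "svc-ai-platform" is the shared account from the
# scenario; the per-agent identity names and resource labels are hypothetical.
SHARED_GRANTS = {
    "svc-ai-platform": {
        ("customer-db", "read"),
        ("hr-system", "read"),
        ("ticketing", "read"),
    },
}

PER_AGENT_GRANTS = {
    "agent-support": {("customer-db", "read"), ("ticketing", "read")},
    "agent-hr": {("hr-system", "read")},
}


def is_allowed(grants, identity, resource, action):
    """Deny by default: allow only if the identity holds this exact grant."""
    return (resource, action) in grants.get(identity, set())


# The injected support agent asks for HR data.
shared_result = is_allowed(SHARED_GRANTS, "svc-ai-platform", "hr-system", "read")
scoped_result = is_allowed(PER_AGENT_GRANTS, "agent-support", "hr-system", "read")

print(shared_result)  # True: the shared account legitimately holds HR access
print(scoped_result)  # False: the support agent's own identity does not
```

Same request, same injected prompt. The only thing that changed is which identity the authorization check sees.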

Scenario 2: The Privilege Escalation Chain

Setup: Three AI agents share svc-automation: a code review agent (needs read access to repos), a deployment agent (needs write access to production), and a monitoring agent (needs read access to infrastructure metrics).

Attack: A developer pushes a malicious code change that includes a prompt injection targeting the code review agent. The agent, now under attacker influence, has access to production deployment capabilities because the shared account has deployment permissions (needed by the deployment agent). The attacker uses the code review agent’s compromised session to push unauthorized changes directly to production.

Impact: Production compromise through a code review tool. The deployment agent’s permissions became an attack surface for the code review agent because they shared an identity.

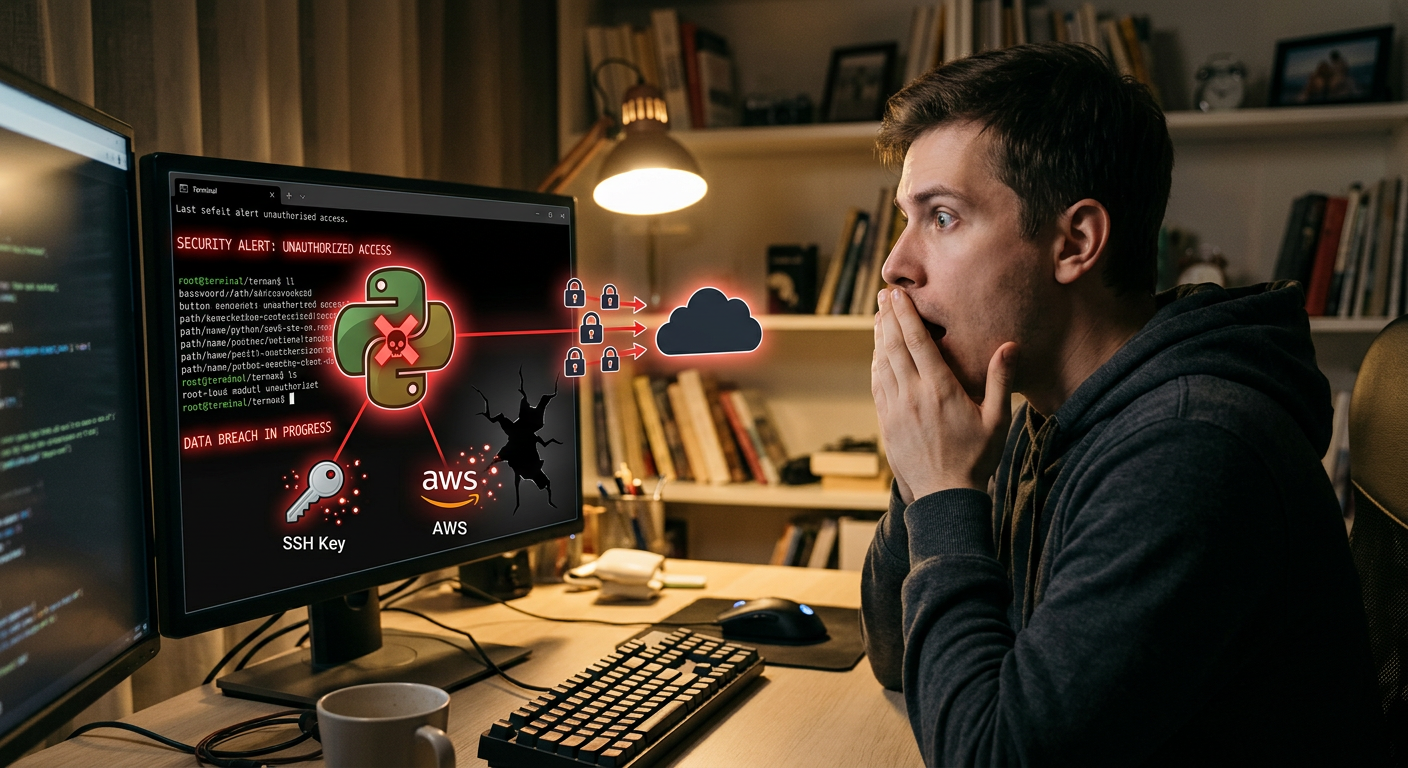

Scenario 3: The Invisible Insider

Setup: A company uses a shared service account for all AI agents. An employee with admin access to the AI platform starts using the agents to exfiltrate data, knowing that their actions will be attributed to svc-ai-platform rather than their personal identity.

Attack: The employee crafts custom prompts that instruct AI agents to query sensitive databases and export results to an external location. Every action is logged under the service account, not the employee’s identity.

Impact: An insider threat that’s virtually undetectable because the human actor is hidden behind a shared machine identity. Even forensic investigation can’t definitively attribute the actions.

The Governance Gap: Nobody Owns This Problem

Here’s one of the most frustrating aspects of the shared service account trap: nobody thinks it’s their problem.

Ask the security team who owns AI agent identity governance. They’ll tell you it’s a DevOps problem — “We don’t manage service accounts for platform tools. That’s infrastructure.”

Ask DevOps. They’ll say it’s a security problem — “IAM policy is owned by the security team. We just need an account that works.”

Ask the AI/ML team. They’ll look confused — “We’re focused on model performance and prompt engineering. Identity? That’s infrastructure.”

Ask IT. They’ll say they weren’t consulted — “Nobody told us they were deploying AI agents. We found out when the service account started hitting systems we didn’t expect.”

This governance gap is precisely why the problem persists. AI agent deployment has fallen into an organizational no-man’s-land where the speed of adoption has outpaced the maturity of governance frameworks. The teams deploying agents optimize for functionality. The teams responsible for identity governance don’t even know the agents exist.

This gap is compounded by the fact that most traditional IAM tools and processes weren’t designed for AI agents. Your PAM solution can vault and rotate the shared service account password, but it can’t enforce per-agent identity. Your IGA platform can certify access for human users, but it has no concept of an “AI agent” as an identity type. Your SIEM can alert on anomalous service account behavior, but it can’t distinguish between five different agents using the same account.

What Good Looks Like: Principles for AI Agent Identity

Fixing this problem requires treating AI agents as first-class identities — not as an afterthought bolted onto existing service account infrastructure. Here’s what a mature AI agent identity model looks like.

1. Per-Agent Identity (Non-Negotiable)

Every AI agent gets its own identity. Not one per “platform.” Not one per “team.” One per agent. If you have five agents, you have five service principals, five sets of credentials, five distinct entries in your identity provider.

This is the foundation everything else builds on. Without per-agent identity, you can’t audit, you can’t scope permissions, you can’t detect anomalies, and you can’t contain incidents. It’s like trying to run a company where every employee uses the same badge — technically functional, operationally insane.

2. Least Privilege, Scoped Per Agent

Each agent identity gets the minimum permissions required for its specific function. The support agent gets read access to the customer database and write access to the ticketing system. The deployment agent gets write access to production infrastructure. The monitoring agent gets read access to metrics. No overlap. No “just in case” permissions.

This requires actually understanding what each agent does — which means maintaining an inventory of agents, their functions, their data access patterns, and their tool integrations. If you can’t describe an agent’s permission requirements in a single paragraph, the agent is probably doing too much and needs to be decomposed.

3. Short-Lived, Scoped Credentials

Long-lived API keys and static passwords are the accelerant on this fire. AI agent credentials should be:

- Short-lived: Hours, not months. Use OIDC federation, workload identity, or similar mechanisms to issue credentials that expire quickly.

- Scoped per session: If an agent needs to access three systems in a workflow, issue three scoped tokens rather than one all-powerful credential.

- Automatically rotated: No human-in-the-loop for credential rotation. If a credential lives longer than its rotation period, it gets revoked automatically.

Cloud providers already support this. AWS has IAM Roles Anywhere and STS for short-lived tokens. Azure has Managed Identities and Workload Identity Federation. GCP has Workload Identity Federation. The mechanisms exist; they just need to be applied to AI agents specifically.
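
To show what "short-lived and scoped" means mechanically, here is a toy Python sketch using only the standard library. It is not a substitute for a cloud token service or OIDC federation: the signing key, the claim names, and the `issue_token` / `verify_token` helpers are all assumptions made for illustration. What it demonstrates is that a verifier rejects a token that is expired, out of scope, or tampered with.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Demo-only signing key. A real deployment would rely on the identity
# provider or cloud token service rather than a key embedded in code.
SIGNING_KEY = b"demo-only-key"


def issue_token(agent_id: str, scope: str, ttl_seconds: int,
                now: Optional[float] = None) -> str:
    """Issue a signed token bound to one agent, one scope, and an expiry time."""
    issued_at = time.time() if now is None else now
    claims = {"sub": agent_id, "scope": scope, "exp": issued_at + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode())
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return f"{payload.decode()}.{signature}"


def verify_token(token: str, required_scope: str,
                 now: Optional[float] = None) -> bool:
    """Accept the token only if signature, scope, and expiry all check out."""
    payload, _, signature = token.rpartition(".")
    expected = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload))
    current = time.time() if now is None else now
    return claims["scope"] == required_scope and current < claims["exp"]


# One-hour token for a single scope, issued at a fixed clock value for the demo.
token = issue_token("agent-support", "ticketing:write", ttl_seconds=3600, now=1_000.0)
print(verify_token(token, "ticketing:write", now=1_500.0))  # in scope, not expired
print(verify_token(token, "hr-system:read", now=1_500.0))   # wrong scope
print(verify_token(token, "ticketing:write", now=5_000.0))  # past expiry
```

A stolen copy of this token is useful for at most an hour and against exactly one scope, which is the property you want from whichever real mechanism you adopt.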

4. Rich Audit Logging With Agent Context

Every action an AI agent takes should be logged with:

- Agent identity: Which specific agent took the action

- Action context: What prompt or workflow triggered the action

- Chain of reasoning: Why the agent decided to take this action (as much as the agent can provide)

- Upstream trigger: What initiated the agent’s workflow (a user request, a schedule, an event)

- Session correlation: A way to link related actions across systems in a single agent workflow

This logging enables detection of anomalous agent behavior, forensic investigation after incidents, and compliance demonstration for auditors. Without it, your AI agents are a black box with admin access.
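
One way to carry the fields listed above is a small structured event emitted as JSON. This is a sketch, not a logging standard: the class name, field names, and example values are assumptions chosen to mirror the list.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentAuditEvent:
    agent_id: str          # which specific agent acted
    action: str            # what it did, e.g. "cmdb.query"
    action_context: str    # prompt or workflow step that triggered the action
    reasoning: str         # the agent's stated rationale, as far as available
    upstream_trigger: str  # user request, schedule, or event
    session_id: str        # shared by every action in one workflow run
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize to one JSON line suitable for shipping to a log pipeline."""
        return json.dumps(asdict(self), sort_keys=True)


def new_session_id() -> str:
    """Random identifier used to correlate actions within one workflow run."""
    return uuid.uuid4().hex


# Two steps of one triage workflow share a session_id, so they can be
# stitched back together even though they hit different systems.
session = new_session_id()
events = [
    AgentAuditEvent("security-triage-agent", "siem.read_alert",
                    "triage workflow, step 1", "new high-severity alert",
                    "event: alert created", session),
    AgentAuditEvent("security-triage-agent", "cmdb.query",
                    "triage workflow, step 2", "need asset context for the alert",
                    "event: alert created", session),
]
for event in events:
    print(event.to_json())
```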

5. Continuous Monitoring and Behavioral Baselines

AI agents exhibit behavioral patterns. The support agent queries the customer database during business hours. The deployment agent pushes code during deployment windows. The monitoring agent reads metrics constantly. Establish baselines for each agent and alert when behavior deviates.

This is where per-agent identity pays dividends. With separate identities, you can build a behavioral profile for each agent and detect when one goes off-script. With a shared account, every agent’s behavior is noise in one undifferentiated signal.
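
Here is a deliberately simple Python sketch of that difference. It treats a baseline as the set of (resource, action) pairs each identity has been seen using, which is far cruder than real anomaly detection; the agent names, resources, and helper functions are all hypothetical.

```python
from collections import defaultdict


def build_baseline(events):
    """Map each identity to the (resource, action) pairs seen during the baseline window."""
    baseline = defaultdict(set)
    for identity, resource, action in events:
        baseline[identity].add((resource, action))
    return dict(baseline)


def find_deviations(baseline, new_events):
    """Return events whose (resource, action) pair was never seen for that identity."""
    return [
        (identity, resource, action)
        for identity, resource, action in new_events
        if (resource, action) not in baseline.get(identity, set())
    ]


def collapse_to_shared(events, account="svc-ai-platform"):
    """Rewrite every event as if all agents used one shared account."""
    return [(account, resource, action) for _, resource, action in events]


history = [
    ("agent-support", "customer-db", "read"),
    ("agent-support", "ticketing", "write"),
    ("agent-hr", "hr-system", "read"),
]
suspicious = [("agent-support", "hr-system", "read")]

per_agent_alerts = find_deviations(build_baseline(history), suspicious)
shared_alerts = find_deviations(
    build_baseline(collapse_to_shared(history)), collapse_to_shared(suspicious)
)

print(per_agent_alerts)  # the support agent touching HR is flagged
print(shared_alerts)     # empty: the shared account reads HR every day
```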

Frameworks and Standards: Where to Anchor Your Program

You don’t have to build your AI agent IAM program from scratch. Several frameworks provide useful guidance.

NIST AI Risk Management Framework (AI RMF 1.0): While not specifically about IAM, the NIST AI RMF’s governance and risk management functions directly apply. The “Map” function emphasizes understanding AI system context and dependencies — which includes identity and access patterns. The “Manage” function calls for controls proportional to risk, which argues for per-agent identity when agents have access to sensitive systems.

OWASP Agentic AI Security: The emerging OWASP guidance on agentic AI security explicitly addresses identity and access management as a core concern. Key recommendations include implementing least-privilege access per agent, using scoped and time-limited credentials, maintaining audit trails that distinguish between agents, and monitoring for behavioral anomalies. Their threat modeling approach for agentic systems treats shared service accounts as a known vulnerability class.

CIS Controls v8: Control 5 (Account Management) and Control 6 (Access Control Management) apply directly. The principle of unique, attributable accounts and least-privilege access doesn’t have a carve-out for AI systems. If your CIS implementation doesn’t cover AI agents, it’s incomplete.

Zero Trust Architecture (NIST SP 800-207): Zero Trust’s core principle — never trust, always verify — applies to AI agents as much as human users. Per-agent identity, continuous verification, and least-privilege access are zero trust fundamentals. If your zero trust program covers human users but not AI agents, you have a zero trust gap.

Practical Steps You Can Take Today

You don’t need a six-month initiative to start fixing this. Here are concrete actions you can take right now.

This Week

- Inventory your AI agents. List every AI agent running in your environment. Include who deployed it, what account it uses, what systems it accesses, and what permissions it has. You will almost certainly discover agents you didn’t know existed.

- Identify shared accounts. Flag every service account used by more than one AI agent. These are your highest-priority risks.

- Check credential hygiene. For each shared account: When was the password last rotated? Who has access to the credentials? Are they stored in a vault or hardcoded? Are they long-lived API keys?
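
Once the inventory exists, even as a spreadsheet export, the first two checks above are a few lines of Python. The rows, field names, and the 90-day rotation threshold below are assumptions for illustration; substitute whatever your own policy and data source look like.

```python
from collections import defaultdict
from datetime import date

# Hypothetical inventory rows; in practice these would come from your
# deployment records or an identity provider export.
INVENTORY = [
    {"agent": "support-agent", "account": "svc-ai-platform", "last_rotated": date(2023, 1, 10)},
    {"agent": "invoice-agent", "account": "svc-ai-platform", "last_rotated": date(2023, 1, 10)},
    {"agent": "deploy-agent", "account": "svc-deploy", "last_rotated": date(2024, 5, 1)},
]


def shared_accounts(inventory):
    """Return accounts used by more than one agent, with the agents that share them."""
    by_account = defaultdict(list)
    for row in inventory:
        by_account[row["account"]].append(row["agent"])
    return {acct: sorted(agents) for acct, agents in by_account.items() if len(agents) > 1}


def stale_accounts(inventory, today, max_age_days=90):
    """Return accounts whose credentials are older than the assumed rotation policy."""
    return sorted({
        row["account"]
        for row in inventory
        if (today - row["last_rotated"]).days > max_age_days
    })


print(shared_accounts(INVENTORY))
print(stale_accounts(INVENTORY, today=date(2024, 6, 1)))
```

Accounts that show up in both lists are the ones to fix first.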

This Month

- Create per-agent identities for your highest-risk agents. Start with agents that have write access to production systems, access to PII or financial data, or external-facing capabilities. Create individual service principals and migrate them off the shared account.

- Implement short-lived credentials. Switch at least your highest-risk agents from static API keys to short-lived tokens using your cloud provider’s workload identity features.

- Add agent context to your logging. Modify your agents to include identifying metadata in their API calls and log entries. Even if you can’t change the service account immediately, adding an agent identifier header to API calls gives you attribution in your logs.
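
The agent identifier header mentioned above can be as small as the following sketch. The header names `X-Agent-Id` and `X-Agent-Session-Id` are made up for this example, not a standard, and the helper is hypothetical; use whatever naming your API gateway and log pipeline already understand.

```python
import uuid

# Header names are assumptions for illustration, not a standard.
AGENT_ID_HEADER = "X-Agent-Id"
SESSION_ID_HEADER = "X-Agent-Session-Id"


def with_agent_context(headers, agent_id, session_id=None):
    """Return a copy of the headers with agent attribution added."""
    if not agent_id:
        raise ValueError("agent_id is required for attribution")
    enriched = dict(headers)  # copy so the caller's dict is not mutated
    enriched[AGENT_ID_HEADER] = agent_id
    enriched[SESSION_ID_HEADER] = session_id or uuid.uuid4().hex
    return enriched


base_headers = {"Authorization": "Bearer <token>"}
request_headers = with_agent_context(base_headers, "security-triage-agent")
print(sorted(request_headers))
```

Keep in mind that a self-asserted header is attribution, not authentication. A compromised agent can lie about who it is, so treat this as a stopgap for log quality until each agent has its own credentials.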

This Quarter

- Establish an AI agent identity governance process. Define who owns agent identity. Create a registration process for new agents. Require per-agent identity as a deployment prerequisite. Include agent identity in your access certification reviews.

- Build behavioral baselines. Use your enhanced logging to establish normal behavioral patterns for each agent. Set up alerts for deviations. This is your AI-specific anomaly detection.

- Integrate with your PAM/IGA platform. Work with your identity tool vendors to support AI agents as a first-class identity type. Most major IAM platforms are adding this capability; ask for it if it’s not available yet.

- Run a tabletop exercise. Simulate a compromised AI agent scenario. Walk through your incident response process. Can you identify which agent was compromised? Can you revoke its access without affecting other agents? Can you reconstruct what it did? If the answer to any of these is “no,” you know where to focus next.
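
The "per-agent identity as a deployment prerequisite" rule from the governance step above can be enforced by a very small gate. The following Python sketch assumes an in-memory registry and invented names throughout; a real version would sit in your deployment pipeline and talk to your identity provider.

```python
class RegistrationError(Exception):
    """Raised when an agent fails the identity prerequisites for deployment."""


def register_agent(registry, agent_name, identity, owner):
    """Add an agent to the registry, refusing shared or unowned identities."""
    if not owner:
        raise RegistrationError(f"{agent_name}: an accountable owner is required")
    if agent_name in registry:
        raise RegistrationError(f"{agent_name}: already registered")
    for existing_name, record in registry.items():
        if record["identity"] == identity:
            raise RegistrationError(
                f"{agent_name}: identity {identity!r} is already used by {existing_name}"
            )
    registry[agent_name] = {"identity": identity, "owner": owner}
    return registry[agent_name]


registry = {}
register_agent(registry, "support-agent", "sp-support-agent", owner="support-platform-team")

try:
    # A second agent trying to reuse the first agent's identity is rejected.
    register_agent(registry, "invoice-agent", "sp-support-agent", owner="finance-eng")
except RegistrationError as err:
    print(f"blocked: {err}")
```

Because every registered agent maps to exactly one identity and one owner, the tabletop questions about which agent was compromised and whose access to revoke have a place to be answered.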

The Uncomfortable Truth

The shared service account trap persists because fixing it requires coordination across teams that don’t usually collaborate — security, DevOps, AI/ML, and IT. It requires investment in identity infrastructure that doesn’t have an obvious ROI until something goes wrong. And it requires admitting that the speed of AI agent deployment has outpaced governance maturity.

But here’s the thing: the attack surface keeps growing. Every new AI agent deployed under a shared service account increases the blast radius of a compromise. Every day those agents run with aggregated privileges is a day an attacker could exploit them. Every audit log entry that says svc-ai-platform instead of identifying a specific agent is a forensic dead end waiting to happen.

The 43% stat isn’t just a benchmark. It’s a prediction of future breach reports. The companies that fix this now will be the ones writing case studies. The companies that don’t will be the ones in the case studies.

Your AI agents aren’t cron jobs. Stop giving them identity like they are.

Want to go deeper on AI agent security? Check out the OWASP Agentic AI Security Project and NIST AI RMF for comprehensive frameworks and guidance.